AI supercomputers combine HPC, specialized processors, and cloud infrastructure to train massive AI models, enabling breakthroughs in healthcare, climate, finance, and autonomous systems.

Abstract

Artificial intelligence (AI) has reached a stage where available computing power sets the pace of innovation. AI supercomputers mark the convergence of high-performance computing (HPC), specialized AI hardware, and scalable cloud infrastructure for training and deploying increasingly complex models. These systems can process massive datasets and support scientific breakthroughs in healthcare, climatology, autonomous systems, and financial modelling. The rapid growth of AI workloads has spurred investment in supercomputing hardware across the world, with nations racing to build the most formidable machines. As computing power reaches exascale and beyond, energy efficiency, accountability, and performance optimization become key concerns. This report describes the evolution and outlook of the AI supercomputing ecosystem, including technological advancements, international rivalry, architecture, and future perspectives.

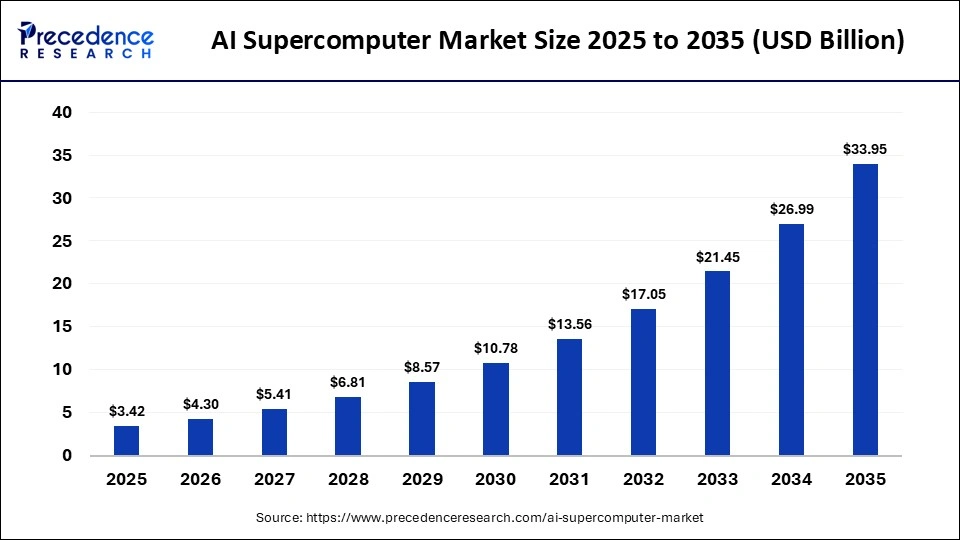

What is the AI Supercomputer Market Size in 2026?

The global AI supercomputer market size was estimated at USD 3.42 billion in 2025 and is predicted to increase from USD 4.30 billion in 2026 to approximately USD 33.95 billion by 2035, expanding at a CAGR of 25.80% from 2026 to 2035.
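As a quick sanity check on the forecast, the compound annual growth rate can be recomputed from the two endpoint values. The sketch below assumes the standard CAGR formula and nine compounding years (2026 to 2035); the function name `cagr` is introduced here for illustration only. The result should come out close to the quoted 25.80%, with any small difference due to rounding of the published figures.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoint figures quoted in the text: USD 4.30 billion (2026)
# and USD 33.95 billion (2035), i.e. nine compounding years.
rate = cagr(4.30, 33.95, 2035 - 2026)
print(f"Implied CAGR: {rate:.2%}")
```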

Executive Summary

AI supercomputers have become critical infrastructure for the development of modern AI. These systems combine special-purpose processors, high-speed interconnects, and advanced cooling to serve large machine learning workloads. AI supercomputing is becoming increasingly competitive globally, with the U.S. and China dominating investment in HPC infrastructure. The industry is shifting away from centralized, monolithic supercomputers toward distributed and cloud-based architectures that can scale workloads dynamically.

Energy efficiency and sustainability are emerging as significant design considerations because of the massive power demands of AI training clusters. In addition, concerns about transparency and accountability have led to novel frameworks such as AI forensic tracking and algorithmic auditing. Looking ahead, AI supercomputing is expected to evolve through advances in processor architecture, modular cluster design, and quantum-accelerated computing.

Setting the Stage: A Compelling Introduction

The rapid development of AI has sharply increased the computational demands of training and running large-scale models. AI supercomputers are specialized HPC systems capable of processing enormous amounts of data and performing trillions of calculations per second. In contrast to classic supercomputers, AI-oriented systems are tailored to deep learning workloads with special accelerators, including GPUs and custom AI processors. These infrastructures support critical applications in healthcare, climate science, financial modeling, and autonomous systems. As organizations become increasingly dependent on AI-based insights, supercomputing power is becoming a strategic asset. AI supercomputers are thus reshaping innovation and economic competition globally.

Emerging AI Technologies for Supercomputing

AI workloads have grown exponentially over the last ten years, driven by larger machine learning models and access to big datasets. Earlier AI models did not need large computing capabilities; current models, however, may include hundreds of billions of parameters. This has generated unprecedented interest in HPC infrastructure. National research laboratories and technology companies are building large-scale AI clusters that can provide exascale performance. The rapid development of AI supercomputers is expanding research opportunities and speeding up scientific discovery.

A Global Landscape of AI Supercomputers

The U.S. has long been at the forefront of supercomputing worldwide, with massive investments in research and state-of-the-art computing infrastructure. Some of the most powerful AI supercomputers in the world are still being developed in its national laboratories and technology companies. Government initiatives facilitate collaboration among academia, industry, and public research institutions. These programs accelerate breakthroughs in climate modelling, genomics, and advanced AI development. The nation remains at the forefront of AI software ecosystems and hardware innovation.

China has been quick to develop AI supercomputing through government funding and national research projects. The nation has invested heavily in domestic supercomputing infrastructure and chip development. Chinese organizations are building high-performance systems that support research on AI models, national security, and advanced manufacturing. These efforts reflect China's strategic focus on technological independence and AI leadership. China is among the competitors gaining ground in the global supercomputing race.

Energy Matters: Unplugging the Myths of Power Consumption

The power required by an AI supercomputer is tremendous because of the huge number of processors and memory systems running concurrently. The power demand of deep learning clusters may reach hundreds of megawatts. This is driving innovation in new cooling systems and power-efficient chip architectures. The use of renewable energy to counter environmental effects is also becoming a trend among data center operators. Sustaining performance while reducing power use has become one of the main concerns of modern AI computing infrastructure.
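To make the scale concrete, the arithmetic behind such figures can be sketched as power multiplied by run time, scaled by the facility's power usage effectiveness (PUE, the ratio of total facility energy to IT equipment energy). All inputs below are hypothetical placeholders chosen for illustration, not measurements from any real cluster, and the helper name `facility_energy_mwh` is an assumption of this sketch.

```python
def facility_energy_mwh(it_power_mw: float, hours: float, pue: float) -> float:
    """Total facility energy in MWh for a given IT load, run time, and PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_power_mw * hours * pue


# Hypothetical inputs, for illustration only.
it_power_mw = 100.0   # assumed average IT load of the cluster
hours = 30 * 24       # assumed 30-day training run

baseline = facility_energy_mwh(it_power_mw, hours, pue=1.2)
improved = facility_energy_mwh(it_power_mw, hours, pue=1.1)
print(f"Baseline: {baseline:,.0f} MWh, improved cooling: {improved:,.0f} MWh")
print(f"Energy saved by the lower PUE: {baseline - improved:,.0f} MWh")
```

Under these assumed numbers, a modest improvement in cooling overhead changes the total by thousands of megawatt-hours over a single run, which is why cooling and chip efficiency receive so much attention.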

AI Blackbox: The Cockpit of the Supercomputer

The black box nature of large machine learning models is one of the biggest problems in large AI systems. The bigger and more advanced an AI system becomes, the harder it is to understand how it arrives at a given decision. This lack of transparency raises issues of reliability, fairness, and compliance with regulations. Explainable AI methods are being researched to shed light on how model decisions are made. Increasing transparency is key to building trust in autonomous AI-driven systems.

The black box nature of large AI models also complicates debugging and error correction, making it difficult to identify and rectify mistakes effectively. Additionally, it hampers the ability to improve models over time, as understanding their decision-making processes is essential for targeted enhancements. This opacity can also lead to ethical concerns, especially when AI systems are used in sensitive areas like healthcare, finance, or criminal justice, where accountability and moral responsibility are critical.

Forensic Accountability of AI: Key Pointers

- Understanding AI Forensics: AI forensics involves techniques and methodologies used to analyze AI systems to ensure transparency and traceability in their decision-making processes.

- Importance of Transparency: Transparency in AI models allows stakeholders to understand how decisions are made, thus fostering trust and confidence in AI systems.

- Algorithmic Auditing: Regular audits of AI algorithms are essential for identifying biases, inaccuracies, or unethical behavior, ensuring that systems operate within ethical and legal boundaries.

- Data Provenance: Maintaining a record of data sources, transformations, and usage helps ensure the integrity and reliability of AI outputs, making it easier to trace back issues if they arise.

- Model Explainability: Ensuring that AI models provide understandable explanations for their outputs is crucial for accountability, especially in high-stakes situations like healthcare or law enforcement.

- Regulatory Compliance: Adhering to regulations and standards established by industry bodies and governments helps maintain accountability and can shield organizations from legal repercussions.

- Incident Reporting Mechanisms: Establishing protocols for reporting and responding to AI-related incidents enhances accountability by ensuring that organizations learn from mistakes.

- Stakeholder Involvement: Engaging a diverse group of stakeholders, including ethicists, consumers, and technologists, in the development process can lead to more robust accountability frameworks.

Cloud-Based and Distributed Supercomputing

Traditional supercomputers were centralized machines housed in research laboratories or government facilities. Today, distributed architectures enable organizations to link computing resources across multiple data centers. Cloud computing platforms that offer scalable AI infrastructure are becoming increasingly significant. Businesses no longer need to build their own physical infrastructure to access supercomputing capabilities.

- Capability vs Capacity: Two measures are commonly used to assess supercomputer performance: capability and capacity. Capability is the system's ability to tackle a single, unusually complex problem that demands maximum computational power. Capacity is the amount of work the system can handle within a given period. A system is used most effectively when these two factors are well balanced.

- Green Building and Energy Saving: High energy consumption is one of the key problems related to AI supercomputing. The electricity used to train large AI models can be massive. As clusters grow, their energy efficiency becomes essential to keeping operations cost-effective and environmentally friendly. Data center operators are moving towards liquid cooling and low-power processor designs. The integration of renewable energy is also emerging as a significant approach to minimizing the carbon footprint of AI infrastructure.

Challenges Associated with AI Supercomputers

As AI models grow more complex, the debate on transparency and accountability is intensifying. Most AI systems operate as black boxes, and it is hard to understand how they make decisions. To address this problem, researchers are developing frameworks for AI auditing and forensic examination. These mechanisms can work like a flight recorder, saving operating data so that it can be analyzed after system malfunctions or unforeseen consequences.
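The flight recorder idea can be illustrated with a small append-only log in which every entry carries a hash of the previous one, so later tampering becomes detectable. This is a minimal sketch under stated assumptions, not a description of any particular auditing framework: the class name `FlightRecorder`, the event fields, and the choice of SHA-256 hash chaining are all illustrative.

```python
import hashlib
import json


class FlightRecorder:
    """Append-only event log in which each entry commits to the previous one."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        # Serialize deterministically so the same event always hashes the same.
        payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def record(self, event: dict) -> str:
        """Append an event and return the hash that seals it into the chain."""
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry_hash = self._digest(prev_hash, event)
        self.entries.append({"prev": prev_hash, "event": event, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


# Hypothetical events, for illustration only.
recorder = FlightRecorder()
recorder.record({"step": 1, "model": "demo-model", "input_id": "a1", "output": "approve"})
recorder.record({"step": 2, "model": "demo-model", "input_id": "b2", "output": "deny"})
print("Log intact:", recorder.verify())
```

An auditor holding only the final hash can confirm that the recorded history has not been altered, which is the property that makes such a log useful after an incident.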

AI Supercomputing: The Next Frontier

The AI supercomputing market is predicted to grow rapidly over the coming decade. Progress in semiconductor technology and distributed computing architectures will greatly increase computational capacity. The industry may also be transformed by emerging technologies such as neuromorphic computing and quantum processors. Meanwhile, energy efficiency and sustainability will be critical to sustaining large-scale computing infrastructure.

Conclusion

AI supercomputers are rapidly emerging as the foundation of the global AI ecosystem. Their capacity to handle large volumes of data and train highly complex models is enabling innovation across industries. Investment in advanced computing infrastructure will keep accelerating as competition between countries and technology firms increases. Solving the energy consumption, transparency, and scalability issues will be essential to the long-term growth of the industry. Last but not least, AI supercomputers will make a decisive contribution to the digital innovation and scientific discovery of the future.

Expert Advice

According to Precedence Research, AI supercomputers are emerging as a pivotal tool in the technology sector. Governments are investing heavily to establish robust infrastructure for AI supercomputers in their respective nations. Demand for AI supercomputers is anticipated to rise rapidly in the coming years, owing to their widespread use across various sectors, including healthcare, energy, climate modelling, and finance. Adopters can accelerate their tasks, reducing time and manual errors, especially in complex calculations.

About the Authors

Aditi Shivarkar

Aditi, Vice President at Precedence Research, brings over 15 years of expertise at the intersection of technology, innovation, and strategic market intelligence. A visionary leader, she excels in transforming complex data into actionable insights that empower businesses to thrive in dynamic markets. Her leadership combines analytical precision with forward-thinking strategy, driving measurable growth, competitive advantage, and lasting impact across industries.

Aman Singh

With over 13 years of progressive expertise at the intersection of technology, innovation, and strategic market intelligence, Aman Singh stands as a leading authority in global research and consulting. Renowned for his ability to decode complex technological transformations, he provides forward-looking insights that drive strategic decision-making. At Precedence Research, Aman leads a global team of analysts, fostering a culture of research excellence, analytical precision, and visionary thinking.

Piyush Pawar

Piyush Pawar brings over a decade of experience as Senior Manager, Sales & Business Growth, acting as the essential liaison between clients and our research authors. He translates sophisticated insights into practical strategies, ensuring client objectives are met with precision. Piyush’s expertise in market dynamics, relationship management, and strategic execution enables organizations to leverage intelligence effectively, achieving operational excellence, innovation, and sustained growth.
