Model Evaluation and Benchmarking Tools Market Revenue to Attain USD 9.57 Bn by 2035

Model Evaluation and Benchmarking Tools Market Revenue and Trends 2026 to 2035

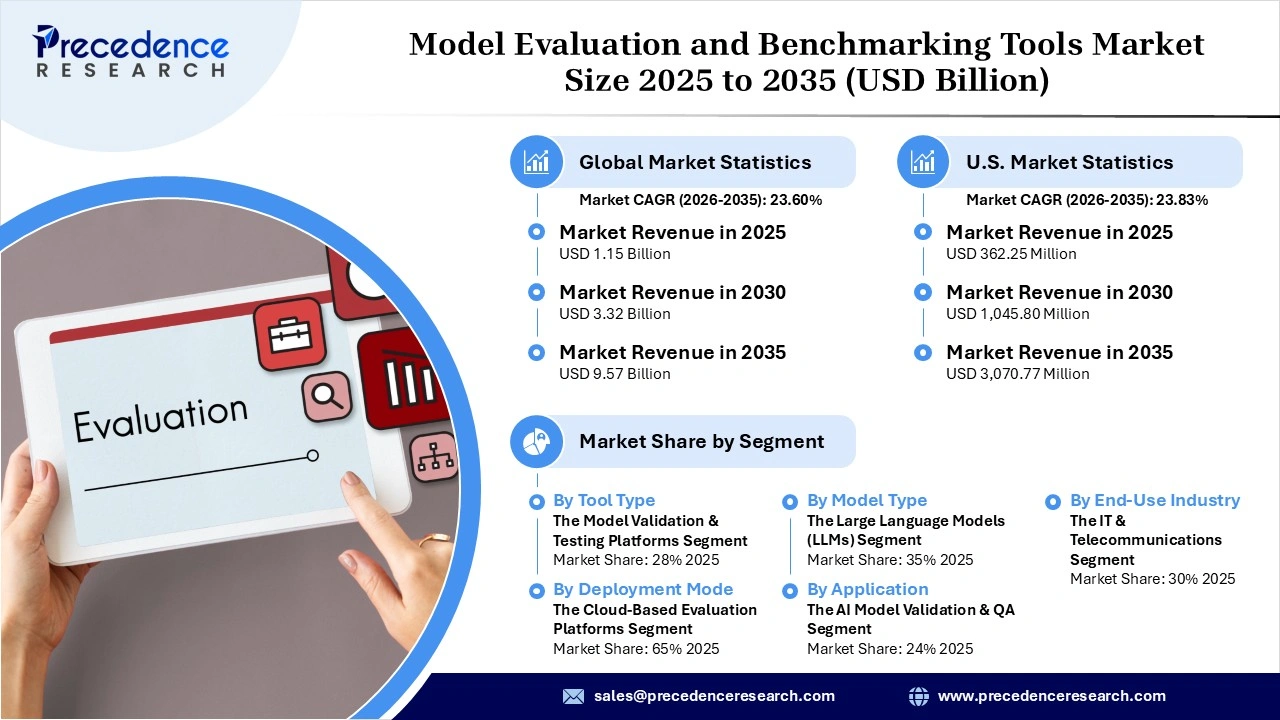

The global model evaluation and benchmarking tools market was valued at USD 1.15 billion in 2025 and is expected to reach around USD 9.57 billion by 2035, growing at a CAGR of 23.60% during the forecast period. The market is driven by the increasing adoption of AI models across industries, the need to verify accuracy and reliability before deployment, and the growing need to evaluate AI models against performance standards.
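The headline growth rate can be cross-checked from the two revenue figures. The sketch below is illustrative only: it assumes ten compounding periods (2025 base year to 2035), and the helper names `cagr` and `project` are hypothetical, not taken from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate, returned as a fraction (0.10 == 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures quoted in the report, in USD billion.
REVENUE_2025 = 1.15
REVENUE_2035 = 9.57
PERIODS = 10  # assumption: 2025 is the base year, so 2025 -> 2035 is ten periods

rate = cagr(REVENUE_2025, REVENUE_2035, PERIODS)
print(f"Implied CAGR: {rate:.2%}")
print(f"2035 revenue at the quoted 23.60% CAGR: USD {project(REVENUE_2025, 0.2360, PERIODS):.2f} bn")
```

Under the ten-period assumption, the implied rate comes out at roughly 23.6%, in line with the quoted CAGR. Using nine periods instead (2026 to 2035) would give a noticeably higher rate.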

Market Overview

The model evaluation and benchmarking tools market comprises solutions that examine, validate, and benchmark the performance of AI and machine learning algorithms before and after implementation. It encompasses a range of solutions designed to measure the accuracy, reliability, safety, fairness, and efficiency of the models in use. This market includes benchmarking software solutions, validation platforms, monitoring tools, and consulting services that help assess algorithm quality.

Validation and monitoring tools also detect anomalies and ensure a consistent level of model performance. These tools are used at various stages of the AI application life cycle, from model training through to operation. The market offers solutions for various industries, enabling them to implement AI while meeting the necessary standards and achieving the required results.

What are the Major Factors Driving the Market?

Emergence of Real-Time AI Model Monitoring Tools

The model evaluation and benchmarking tools market is benefiting from recent innovations such as systems that monitor and evaluate AI models in real time, helping firms assess them automatically throughout their entire lifecycle. The benefits of such systems include automated evaluation procedures, fast validation, and the incorporation of testing into the pipeline.

- In 2025, Microsoft unveiled several newly developed scoring products in its Azure AI portfolio, which also includes a new Evaluation API.

Adoption of Synthetic Data for Testing Models

An emerging trend in the market is the adoption of synthetic data, which allows companies to validate their AI models without depending solely on real-world data. Synthetic data can simulate edge cases, complex environments, and critical operations that cannot be easily replicated using manual methods.

- In 2026, NVIDIA launched the blueprint for its open Physical AI Data Factory, a tool that enables large-scale synthetic data generation and automated testing of AI models.

Government Programs Promoting Responsible AI Testing

Governments across the world have been introducing national AI policies, testing procedures, and safety standards to promote responsible validation and deployment of AI technology platforms. Several governments have allocated funds to develop structured validation systems and guidelines to ensure that AI systems are safe, transparent, and reliable before they are released to the market.

- In 2026, the Government of India launched a national AI testing platform for healthcare services, which is intended to provide standards for authorization and ensure that AI-powered healthcare systems are deployed safely.

Development of Large-Scale Multilingual AI Validation Frameworks

Samsung unveiled an AI evaluation framework, TRUEBench, that includes more than 2,400 real-life test cases divided into 10 task categories and conducted in 12 languages. Such large-scale AI testing platforms enable more accurate benchmarking and greater consistency in assessing AI model performance across diverse applications.

Rising Standardization and Scaling of AI Evaluation Frameworks Driving the Model Evaluation and Benchmarking Tools Market

- According to the Stanford Human-Centered AI Institute AI Index Report 2025, global enterprises using AI systems said that, by 2024-2025, they were incorporating formal evaluation into their workflows, representing a 78% rise in the institutionalization of AI practices relative to 2023. Almost 60% of companies were already integrating a formal evaluation model.

- The Stanford Human-Centered AI Institute AI Index Report 2025 states that the size of training datasets in AI benchmarking setups has been doubling approximately every eight months, indicating a rapid increase in the scale of model testing.

- A study published in Nature in 2025 identified more than 790 measures of responsible AI evaluation worldwide, of which around 45% relate specifically to fairness and avoiding bias, making this the most prevalent category of AI evaluation indicators.

- According to the Stanford Human-Centered AI Institute AI Index Report 2025, by 2024-2025, more than 65% of advanced AI models under evaluation were assessed for fairness, accountability, and transparency, compared to less than 50% in previous years.

Market Segmentation Overview

- By Tool Type: The model validation & testing platforms segment led the model evaluation and benchmarking tools market with a 28% share in 2025. This is because these platforms are important for evaluating model accuracy and identifying weaknesses, resulting in their widespread adoption by companies developing AI solutions.

- By Tool Type: The bias, fairness & risk evaluation tools segment is expected to expand at the highest CAGR during the forecast period, due to growing concerns over the ethical use of AI and the increasing demand to identify algorithmic bias, maintain transparency, and comply with regulations in AI implementation.

- By Deployment Mode: The cloud-based evaluation platforms segment dominated the market with a 65% share in 2025, owing to their capability to offer scalable computing power, appropriate datasets for analysis, and smooth integration with the AI development pipeline, allowing companies to conduct tests on AI models without spending much on infrastructure.

- By Deployment Mode: The on-premise model testing tools segment held the second-largest market share of 20% in 2025 because of its significant adoption by companies that handle sensitive information and therefore require robust security controls and customization.

- By Application: The AI model validation & QA segment led the model evaluation and benchmarking tools market with a 30% share in 2025, due to its role in checking the accuracy and reliability of AI models and providing useful feedback, making it a vital component for companies seeking consistent and dependable AI applications.

- By Application: The regulatory compliance & AI governance segment is expected to grow at the fastest rate from 2026 to 2035, owing to the growing focus on policies and regulations related to AI development and ongoing oversight of AI platforms' operations to maintain transparency and ethical operations.

- By End-Use Industry: The IT & telecommunications segment dominated the market with a 30% share in 2025, because of its early adoption of AI technologies in network management, consumer analysis, and automation software systems. This created an inherent necessity for regular testing and benchmarking of models.

- By End-Use Industry: The automotive & mobility segment is expected to expand at the highest CAGR during the forecast period, due to the increased implementation of AI in autonomous vehicles, driver assistance features, and smart mobility applications that require real-time testing of AI platforms to ensure their safety and reliability.
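The 2025 shares quoted above can be converted into approximate segment revenues. The following is a rough sketch, assuming each share applies to the USD 1.15 billion total for 2025. The resulting figures are derived arithmetic rather than values stated in the report, and the names `SHARES_2025` and `implied_revenue` are hypothetical.

```python
# Total 2025 market revenue quoted in the report, expressed in USD million.
TOTAL_2025_USD_MN = 1150.0

# 2025 segment shares as quoted in the segmentation overview.
SHARES_2025 = {
    "Model validation & testing platforms (tool type)": 0.28,
    "Cloud-based evaluation platforms (deployment mode)": 0.65,
    "On-premise model testing tools (deployment mode)": 0.20,
    "AI model validation & QA (application)": 0.30,
    "IT & telecommunications (end-use industry)": 0.30,
}


def implied_revenue(total: float, share: float) -> float:
    """Revenue implied by applying a fractional share to the market total."""
    return total * share


# Assumption: every share is measured against the same 2025 total.
implied = {
    name: implied_revenue(TOTAL_2025_USD_MN, share)
    for name, share in SHARES_2025.items()
}

for name, value in implied.items():
    print(f"{name}: ~USD {value:,.1f} million")
```

Each segmentation dimension (tool type, deployment mode, application, end-use industry) divides the same total, so figures from different dimensions should not be added together.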

Regional Analysis

North America led the model evaluation and benchmarking tools market with a 42% share in 2025, owing to its well-established AI model development ecosystem and widespread adoption of model validation tools among tech-driven organizations. The U.S. led the market in North America due to the strong presence of prominent AI technology organizations and innovations in enterprise AI solutions. Canada is witnessing notable market growth because of substantial investments in AI governance frameworks.

Asia Pacific is expected to expand at the highest CAGR during the forecast period, owing to the rapid adoption of AI in sectors such as manufacturing, healthcare, and financial services. China led the market in Asia Pacific due to the widespread implementation of AI and the importance placed on verifying the performance of AI algorithms. India is a significant contributor to the market because of high adoption rates of AI solutions among businesses and technology organizations.

Model Evaluation and Benchmarking Tools Market Coverage

| Report Attribute | Key Statistics |
| --- | --- |
| Market Revenue in 2025 | USD 1.15 Billion |
| Market Revenue by 2035 | USD 9.57 Billion |
| CAGR from 2026 to 2035 | 23.60% |
| Quantitative Units | Revenue in USD million/billion, Volume in units |
| Largest Market | North America |
| Base Year | 2025 |
| Regions Covered | North America, Europe, Asia-Pacific, Latin America, and the Middle East & Africa |

Top Companies in the Model Evaluation and Benchmarking Tools Market

Microsoft Corporation, Google LLC (Alphabet Inc.), Amazon Web Services, Inc. (AWS), and IBM Corporation are some of the key players that have embedded AI and machine learning model evaluation within their respective cloud platforms. Model evaluation frameworks and leaderboards are offered by OpenAI Inc., Hugging Face Inc., Weights and Biases Inc., ClearML Ltd., and Domino Data Lab Inc., which are known for model validation and experiment tracking in MLOps environments. Scale AI Inc. and DataRobot Inc. are known for automating the evaluation process for large language models (LLMs) and enterprise AI models.

Segments Covered in the Report

By Tool Type

- Model Validation & Testing Platforms

- Benchmarking Frameworks (LLM Benchmarks, Vision Benchmarks)

- Explainability & Interpretability Tools (XAI)

- Bias, Fairness & Risk Evaluation Tools

- Performance Monitoring & Drift Detection Tools

By Deployment Mode

- Cloud-based Evaluation Platforms

- On-premise Model Testing Tools

- Hybrid Evaluation Environments

By Model Type

- Large Language Models (LLMs)

- Computer Vision Models

- Speech & Multimodal Models

- Predictive & Classical ML Models

By Application

- AI Model Validation & QA

- Regulatory Compliance & AI Governance

- Model Performance Optimization

- Continuous Monitoring & MLOps Integration

- Benchmarking for Model Selection & Procurement

By End-Use Industry

- IT & Telecommunications

- BFSI

- Healthcare

- Retail & E-commerce

- Automotive & Mobility

- Government & Defense

- Others

By Region

- North America

- Latin America

- Europe

- Asia-Pacific

- Middle East and Africa

Get this report to explore global market size, share, CAGR, and trends, featuring detailed segmental analysis and an insightful competitive landscape overview @ https://www.precedenceresearch.com/sample/8326

To place an order or ask any questions, please contact us at sales@precedenceresearch.com | +1 804 441 9344