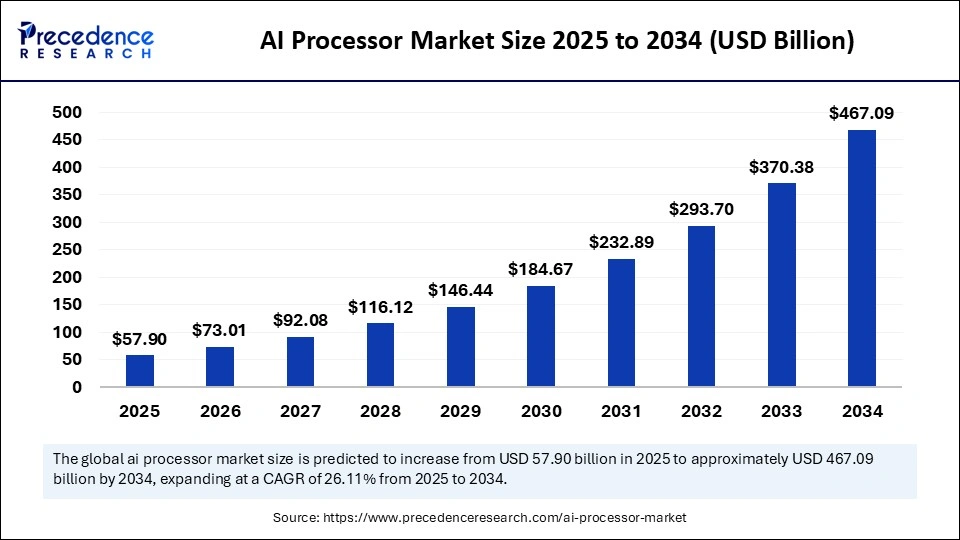

What is the AI Processor Market Size?

The global AI processor market size is calculated at USD 57.90 billion in 2025 and is predicted to increase from USD 73.01 billion in 2026 to approximately USD 467.09 billion by 2034, expanding at a CAGR of 26.11% from 2025 to 2034. The AI processor market is driven by rising adoption of AI technologies across industries, increased data processing needs, and advancements in chip architectures.
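The growth rate quoted above can be cross-checked against the stated start and end values. The snippet below is a minimal sketch that assumes the standard compound annual growth rate formula and a nine-year span (2025 to 2034). The function names `cagr` and `project` are illustrative and do not come from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.25 means 25%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Grow start_value at a constant annual rate for the given number of years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the report, in USD billion.
size_2025 = 57.90
size_2034 = 467.09

# Assumption: the forecast compounds over 2034 - 2025 = 9 annual periods.
implied_rate = cagr(size_2025, size_2034, 2034 - 2025)
print(f"Implied CAGR 2025-2034: {implied_rate:.2%}")

# One year of growth at the implied rate should land near the quoted 2026 value.
print(f"Projected 2026 size: USD {project(size_2025, implied_rate, 1):.2f} billion")
```

Under these assumptions the implied rate comes out at roughly 26.1%, and the one-year projection lands close to the USD 73.01 billion quoted for 2026, so the three headline figures are consistent with each other.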

Market Highlights

- North America led the AI processor market with around 46.4% of the market share in 2024.

- Asia Pacific is expected to expand at the fastest CAGR between 2025 and 2034.

- By processor type, the GPU (graphics processing unit) segment held approximately 35.4% of the market share in 2024.

- By processor type, the NPU (neural processing unit) segment is growing at a double-digit CAGR of 21.5% between 2025 and 2034.

- By deployment mode, the cloud / data center segment captured the highest market share of 65.4% in 2024.

- By deployment mode, the edge / on-device segment is expanding with the highest CAGR from 2025 to 2034.

- By application, the consumer electronics segment held the major market share of 37.4% in 2024.

- By application, the automotive segment is poised to grow at a notable CAGR between 2025 and 2034.

- By end-user industry, the IT & telecom segment captured the biggest market share of 34.4% in 2024.

- By end-user industry, the automotive & industrial segment is expected to expand at a notable CAGR from 2025 to 2034.

Powering the Future: The Surge of AI Processors

Artificial intelligence is finding more and more applications across industries, from self-driving vehicles to smart devices, creating demand for faster processors that run at lower power. AI processors are specialized chips designed to accelerate specific AI workloads, such as machine learning and deep neural network algorithms. By moving data through the system faster, they enable real-time decision making.

The demand is being fueled by increased use of AI in the cloud, rising trends in edge computing, and greater complexity in AI models. As top companies build systems for AI-enabled process automation and intelligent analytics, these processors have facilitated the digital transformation objectives of many segments of the commercial ecosystem, driving higher computing performance across sectors.

Key Technological Shifts

Transforming Computational Power: New Developments in the AI Processor Market

The AI processor market is experiencing rapid growth driven by the need for increased computational speed, lower latency, and greater efficiency in AI-based applications. New-generation chip architectures, such as neuromorphic and heterogeneous designs, have radically changed processing performance by mimicking the human brain and performing multiple tasks at high speed. Such developments allow edge devices to process complex, AI-heavy workloads directly, rather than relying heavily on cloud-based processing.

In addition, leading technology firms are adopting advanced fabrication nodes, such as 3nm and 5nm, to deliver higher performance at lower power. Specialized AI accelerators, integration with quantum processing, and chiplet-based modular architectures are further changing the market terrain. Combined with high-bandwidth memory (HBM) and new interconnects, these advances expand the possibilities in autonomous vehicles, robotics, and data centers. Overall, these developments prepare for a new age of intelligent, efficient, and adaptive computing systems.

Market Trends

- Edge AI Moves to the Forefront: AI processing is shifting from centralized clouds to edge devices, where latency is low and responses are fast. Smartphones, autonomous cars, and Internet of Things (IoT) systems are driving demand for chips that handle real-time, on-device intelligence effectively.

- Custom Chips Redefine Performance: Tech giants are creating domain-specific processors that replace traditional graphics processing units (GPUs). Technologies such as neural processing units (NPUs) and application-specific integrated circuits (ASICs) can optimize speed and energy use, giving businesses a competitive advantage in AI workloads focused on specialized tasks such as vision and speech.

- Efficiency Becomes the New Metric: Manufacturers are refocusing engineering efforts on improving performance-per-watt and reducing hardware costs. Energy-efficient processors are dominating product roadmaps as sustainability goals and operational cost reductions rise to the top of AI hardware deployment priorities.

- Governments Pursue Chip Independence: In light of worldwide chip supply issues, countries are investing heavily in producing AI processors locally. National initiatives target self-sufficiency, data sovereignty, and the development of domestic semiconductor ecosystems that are independent of other countries.

- Training and Inference Converge: The distinction between training chips and inference chips is blurring. Many new architectures deliberately balance training and inference capabilities to support scalable deployments of large models across sectors such as health, finance, and automotive.

AI Processor Market Outlook

[[market_outlook]]

Market Scope

| Report Coverage | Details |
| --- | --- |
| Market Size in 2025 | USD 57.90 Billion |
| Market Size in 2026 | USD 73.01 Billion |
| Market Size by 2034 | USD 467.09 Billion |
| Market Growth Rate from 2025 to 2034 | CAGR of 26.11% |
| Dominating Region | North America |
| Fastest Growing Region | Asia Pacific |
| Base Year | 2025 |
| Forecast Period | 2025 to 2034 |
| Segments Covered | Processor Type, Deployment Mode, Application, End-User Industry, and Region |
| Regions Covered | North America, Europe, Asia-Pacific, Latin America, and Middle East & Africa |

AI Processor Market Segment Insights

[[segment_insights]]

AI Processor Market Regional Insights

[[regional_insights]]

AI Processor Market Value Chain

[[value_chain]]

AI Processor Market Companies

[[market_company]]

Other Players in the Market

- Advanced Micro Devices, Inc. (AMD): AMD offers a broad portfolio of AI processors and accelerators, including the Instinct MI300X and MI300A for high-performance computing and generative AI workloads, as well as Ryzen AI 300 and Ryzen 8000 Series processors with integrated NPUs for edge and consumer devices. The company's architecture emphasizes power efficiency, scalability, and AI-optimized compute performance across data center and client platforms.

- Qualcomm Technologies, Inc.: Qualcomm powers on-device AI and edge computing through its Snapdragon platforms for mobile, PC, XR, and automotive applications. Key technologies include the Qualcomm Hexagon NPU, Adreno GPU, and Kryo/Oryon CPU, enabling real-time AI processing and energy-efficient performance for connected devices.

- Google LLC (Alphabet Inc.): Google designs Tensor Processing Units (TPUs) for data center-scale AI model training and inference and Google Tensor chips for mobile devices. Its custom silicon is optimized for deep learning, language models, and generative AI, providing vertical integration across cloud and consumer AI ecosystems.

- Apple Inc.: Apple integrates AI and machine learning acceleration into its proprietary Apple Silicon (M-series, A-series) chips, featuring Neural Engines that enhance on-device AI tasks such as image processing, speech recognition, and predictive modeling. Apple's focus on edge AI and privacy-preserving intelligence supports seamless AI experiences across its hardware ecosystem.

- Samsung Electronics Co., Ltd.: Samsung develops Exynos processors and AI accelerators with integrated Neural Processing Units (NPUs) for smartphones, IoT, and edge computing. The company's semiconductor division also manufactures AI chips for third parties, combining memory technology and AI compute leadership for hybrid architectures.

- Huawei Technologies Co., Ltd: Huawei produces Ascend AI processors for data centers and Kirin AI chipsets for mobile devices under its HiSilicon division. Its MindSpore AI framework integrates with Ascend hardware to deliver end-to-end AI infrastructure, positioning Huawei as a leader in AI-enabled cloud and edge ecosystems.

- IBM Corporation: IBM focuses on AI hardware innovation through its AIU (Artificial Intelligence Unit) and IBM Telum processors, designed for enterprise-grade AI inference and analytics. Its hardware and hybrid cloud solutions power Watson AI and quantum-class computing, emphasizing efficiency and reliability in business AI workloads.

- MediaTek Inc.: MediaTek integrates AI processing units (APUs) within its Dimensity chipset series for smartphones, IoT devices, and automotive systems. Its NeuroPilot AI technology enables on-device intelligence, camera enhancement, and contextual processing for consumer-grade AI experiences.

- Amazon Web Services (AWS): AWS develops Inferentia and Trainium processors to power AI training and inference within its cloud infrastructure. These chips are purpose-built for large-scale model deployment, offering cost-efficient and high-performance compute for generative AI and deep learning workloads.

- Tesla, Inc.: Tesla's Dojo processor is designed for AI training in autonomous driving systems, enabling high-throughput computation for vision and neural network training. The company's in-house AI chip development supports vertical integration of hardware and software for real-time vehicle intelligence.

- Broadcom Inc.: Broadcom designs custom AI accelerators and ASICs used in data centers, networking, and hyperscale computing. Its solutions optimize low-latency data movement, inference acceleration, and connectivity, serving top-tier AI infrastructure providers.

- Graphcore Ltd.: Graphcore develops Intelligence Processing Units (IPUs) purpose-built for parallel AI computation and deep learning. Its IPU systems deliver high-speed performance for training and inference, with an emphasis on data flow architecture that accelerates next-generation AI workloads.

- Cerebras Systems: Cerebras designs the Wafer-Scale Engine (WSE), the largest single AI processor in the world, purpose-built for deep learning and large language model training. Its integrated system architecture provides unprecedented compute density and bandwidth, reducing training time for massive AI models.

Recent Developments

- In October 2025, Intel announced plans to launch a new AI chip to rival Nvidia and AMD. The company highlighted its unique architecture focused on real-time AI processing efficiency and broad enterprise and consumer applications. (Source: https://timesofindia.indiatimes.com)

- In June 2025, Samsung launched the Galaxy Book4 Edge AI PC in India, featuring Qualcomm's Snapdragon X processor and Microsoft Copilot. The device redefines AI-powered productivity with intelligent task management and energy-efficient performance. (Source: https://news.samsung.com)

- In September 2025, SiPearl introduced the Athena1 processor, its first high-performance European microchip. Designed for supercomputing and AI workloads, it aims to strengthen Europe's technological independence and energy-efficient computing capabilities. (Source: https://www.hpcwire.com)

- In July 2025, Ambient Scientific launched an AI-native processor for edge devices. The chip delivers high-speed, low-power AI inference capabilities, enabling real-time processing for smart IoT, industrial automation, and mobile applications. (Source: https://www.bisinfotech.com)

Exclusive Insights

From an analyst's perspective, the AI processor market is set for substantial growth, particularly as organizations accelerate their adoption of digital transformation and edge intelligence technologies. The increasing adoption of AI workloads in data centers, autonomous and robotics systems, and consumer electronics is driving demand for high-performance, energy-efficient processors.

However, significant challenges remain, including rising costs for chip fabrication, an ongoing shortage of chips in some categories, and a reliance on advanced foundries and manufacturing processes that are demanding and complex.

Moreover, there is competitive pressure from custom chip manufacturers, who are developing optimized architectures for specialized and emerging AI workloads. Regardless, there continue to be opportunities in neuromorphic-based computing and software, general AI, AI-on-device processing, and quantum-capable chips. Creating and maintaining collaborations and partnerships across the ecosystem will be critical to building innovation and ultimately sustaining long-term viability within the AI processor landscape.

AI Processor Market Segments Covered in the Report

[[segment_covered]]

For inquiries regarding discounts, bulk purchases, or customization requests, please contact us at [email protected]
